This is part 1 of a multi-part series in which we will gather, enrich, process and analyse what will hopefully be millions of open source packages from NPMJS, the Node.js package manager.

If you're not familiar with NPMJS, it is the primary registry for the Node.js JavaScript runtime, hosting over 2 million JavaScript packages and libraries.

At the time of writing, NPMJS offers several APIs that we will use to create our dataset.

Replicate API = https://replicate.npmjs.com/_changes?include_docs=true&limit=50&since=%s

Package API = https://registry.npmjs.org/%s

Downloads API = https://api.npmjs.org/downloads/point/last-month/%s

The Replicate API is extremely important: it lists every package that has ever been listed on NPM. To interact with the Replicate API, you must supply a limit and a since sequence value.

Below is some sample data to illustrate how the sequence index works. The values are not contiguous, but they always increase, which lets us track changes to listed packages on the API and also pause and resume pulling down data.
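As a small illustration of supplying limit and since, the sketch below assembles one page request for the Replicate API. The helper name `buildChangesURL` is made up for this example; only the endpoint and the three query parameters come from the URL quoted above.

```go
package main

import (
	"fmt"
	"net/url"
	"strconv"
)

// changesEndpoint is the Replicate API URL quoted above, without its query string.
const changesEndpoint = "https://replicate.npmjs.com/_changes"

// buildChangesURL is a hypothetical helper that builds the URL for one page
// of changes, starting after the given sequence value.
func buildChangesURL(since string, limit int) string {
	q := url.Values{}
	q.Set("include_docs", "true")
	q.Set("limit", strconv.Itoa(limit))
	q.Set("since", since)
	// Encode sorts the keys and escapes the values.
	return changesEndpoint + "?" + q.Encode()
}

func main() {
	// Start from the very beginning of the feed with a page size of 50.
	fmt.Println(buildChangesURL("0", 50))
}
```

Building the query with `url.Values` rather than string formatting keeps the since value safely escaped if it ever stops being a plain number.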

{"seq":5485,"id":"hs2app","changes":[{"rev":"62-16c9c2c95bc84bce53ed96fbb4ceede3"}],"deleted":true},

{"seq":7000,"id":"_design/app","changes":[{"rev":"717-d90479cd4db1ef794aac5c340fbaa2e0"}]},

{"seq":7135,"id":"_design/scratch","changes":[{"rev":"238-dca0e6c5feb2808a2a97b41b7a146fa8"}]},

{"seq":16551,"id":"internal-api-client","changes":[{"rev":"4-e448ab023bfa0de552a3c431f06361c7"}],"deleted":true},

{"seq":832853,"id":"vs-deploy","changes":[{"rev":"351-d9c774a2149d4aec710db185a3778fd3"}]},

{"seq":1783991,"id":"@dsr-user-dormy-noops-grout-firns/dsr-package-public-dormy-noops-grout-firns","changes":[{"rev":"2-548a31544137977dfe4de6291529bd31"}]},

{"seq":1930396,"id":"bpmn-studio","changes":[{"rev":"6313-bba1467201e9e4667edd886312cfcd9f"}]},

{"seq":2480286,"id":"rendition","changes":[{"rev":"4041-fe8e48155489d2a8512773b69abfc731"}]},

Also pay attention to the deleted value: you can skip those records at this stage so you don't pollute your dataset with garbage early on.

Next we will use the NPM package API to fetch some real data for the packages whose names we just pulled down from the Replicate API.

This takes the package name and returns a bunch of useful data:
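The filtering described above can be sketched roughly as follows. The `change` type and the `livePackages` helper are invented for this example, and the sequence is modelled as an integer only because that is how it appears in the sample rows. Rows whose id starts with an underscore (such as `_design/app`) are CouchDB design documents rather than packages, so they are skipped too.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// change mirrors the fields visible in the sample rows above.
type change struct {
	Seq     int64  `json:"seq"`
	ID      string `json:"id"`
	Deleted bool   `json:"deleted"`
}

// livePackages returns the package names worth fetching and the highest
// sequence value seen, so the next request can resume from there.
func livePackages(rows []byte) ([]string, int64, error) {
	var changes []change
	if err := json.Unmarshal(rows, &changes); err != nil {
		return nil, 0, err
	}
	var ids []string
	var lastSeq int64
	for _, c := range changes {
		if c.Seq > lastSeq {
			lastSeq = c.Seq
		}
		// Skip deleted packages and design documents.
		if c.Deleted || strings.HasPrefix(c.ID, "_") {
			continue
		}
		ids = append(ids, c.ID)
	}
	return ids, lastSeq, nil
}

// sampleRows reuses three of the rows shown above, wrapped in a JSON array.
const sampleRows = `[
 {"seq":5485,"id":"hs2app","changes":[{"rev":"62-16c9c2c95bc84bce53ed96fbb4ceede3"}],"deleted":true},
 {"seq":7000,"id":"_design/app","changes":[{"rev":"717-d90479cd4db1ef794aac5c340fbaa2e0"}]},
 {"seq":832853,"id":"vs-deploy","changes":[{"rev":"351-d9c774a2149d4aec710db185a3778fd3"}]}
]`

func main() {
	ids, lastSeq, err := livePackages([]byte(sampleRows))
	if err != nil {
		panic(err)
	}
	fmt.Println(ids, lastSeq)
}
```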

_id "vs-deploy"

_rev "353-504022348fb9733e50c81f49080ef019"

name "vs-deploy"

description "Commands for deploying f…space to a destination."

dist-tags {…}

versions {…}

readme '# vs-deploy\n\n[![Latest…hine. | `CTRL+ALT+L` |\n'

maintainers […]

time {…}

homepage "https://github.com/mkloubert/vs-deploy#readme"

keywords […]

repository {…}

author {…}

bugs {…}

license "MIT"

readmeFilename "README.md"

users {…}

I also chose to add the monthly download statistic for each package, simply because it might be interesting to see the popularity of certain packages as we investigate them.

It is worth noting that the Replicate API is very unreliable. If you decide to implement your own scraper, make sure that you handle errors, timeouts and failures gracefully. You will also want to change the limit parameter as you go.

Through testing I have found that for the first few hours or day of scraping you can use a limit value of 1000 to get your index counter past the very old NPM packages, almost all of which have been deleted. Once you get to live packages, you will want to drop the limit to somewhere between 10 and 100, depending on your luck.
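As a rough sketch of reading the download statistic, the snippet below decodes a Downloads API response body. The field names follow the `DownloadInfo` struct used in the scraper at the end of this post; the numbers and dates in `sampleBody` are invented for illustration and are not real registry data.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// downloadInfo holds the fields the scraper reads from the Downloads API.
type downloadInfo struct {
	Downloads int64  `json:"downloads"`
	Start     string `json:"start"`
	End       string `json:"end"`
	Package   string `json:"package"`
}

// parseDownloads decodes one response body from the Downloads API.
func parseDownloads(body []byte) (downloadInfo, error) {
	var info downloadInfo
	err := json.Unmarshal(body, &info)
	return info, err
}

// sampleBody uses made-up values purely to exercise the decoder.
const sampleBody = `{"downloads":1234,"start":"2025-01-01","end":"2025-01-30","package":"vs-deploy"}`

func main() {
	info, err := parseDownloads([]byte(sampleBody))
	if err != nil {
		panic(err)
	}
	fmt.Printf("%s: %d downloads between %s and %s\n",
		info.Package, info.Downloads, info.Start, info.End)
}
```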

This will be much slower but it will still be faster than dealing with request failures, rate limiting and exponential back-off.
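For reference, capped exponential back-off can be sketched as below. The 2-second base and 5-minute cap mirror the values used in the scraper at the end of this post; the function name and signature are just for this example.

```go
package main

import (
	"fmt"
	"time"
)

const (
	baseDelay = 2 * time.Second // normal pause between requests
	maxDelay  = 5 * time.Minute // never wait longer than this
)

// backoffDelay doubles the base delay once per consecutive failure and
// caps the result at ceiling.
func backoffDelay(base, ceiling time.Duration, failures int) time.Duration {
	delay := base
	for i := 0; i < failures; i++ {
		delay *= 2
		// Return early so a long failure streak cannot overflow the duration.
		if delay >= ceiling {
			return ceiling
		}
	}
	return delay
}

func main() {
	for failures := 0; failures <= 8; failures++ {
		fmt.Println(failures, backoffDelay(baseDelay, maxDelay, failures))
	}
}
```

With these values the wait grows 2s, 4s, 8s and so on, and stays at 5 minutes from the eighth consecutive failure onwards, which is why a smaller limit that rarely fails tends to win overall.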

If you are limited on time, you can also start your index from a non-zero value, although you will probably miss out on quite a few packages depending on when you start.
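Pausing, resuming and starting from a non-zero index all come down to persisting the last sequence value. Below is a minimal sketch, assuming a small JSON checkpoint file; the `checkpoint` type here is trimmed down for the example, and the write-then-rename step is my own suggestion rather than what the scraper at the end of this post does.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
	"os"
	"path/filepath"
)

// checkpoint stores the position to resume from.
type checkpoint struct {
	LastSequence string `json:"last_sequence"`
}

// loadCheckpoint returns the saved position, or startSeq when no file
// exists yet. Pass a non-zero startSeq to skip the oldest changes.
func loadCheckpoint(path, startSeq string) (checkpoint, error) {
	data, err := os.ReadFile(path)
	if errors.Is(err, os.ErrNotExist) {
		return checkpoint{LastSequence: startSeq}, nil
	}
	if err != nil {
		return checkpoint{}, err
	}
	var cp checkpoint
	err = json.Unmarshal(data, &cp)
	return cp, err
}

// saveCheckpoint writes to a temporary file and renames it into place, so
// an interrupted write does not leave a truncated checkpoint behind.
func saveCheckpoint(path string, cp checkpoint) error {
	data, err := json.Marshal(cp)
	if err != nil {
		return err
	}
	tmp := path + ".tmp"
	if err := os.WriteFile(tmp, data, 0o644); err != nil {
		return err
	}
	return os.Rename(tmp, path)
}

func main() {
	dir, err := os.MkdirTemp("", "checkpoint-example")
	if err != nil {
		panic(err)
	}
	defer os.RemoveAll(dir)
	path := filepath.Join(dir, "checkpoint.json")

	cp, _ := loadCheckpoint(path, "0") // no file yet, so this starts at "0"
	cp.LastSequence = "832853"         // pretend we processed up to here
	if err := saveCheckpoint(path, cp); err != nil {
		panic(err)
	}
	resumed, _ := loadCheckpoint(path, "0")
	fmt.Println("resuming from", resumed.LastSequence)
}
```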

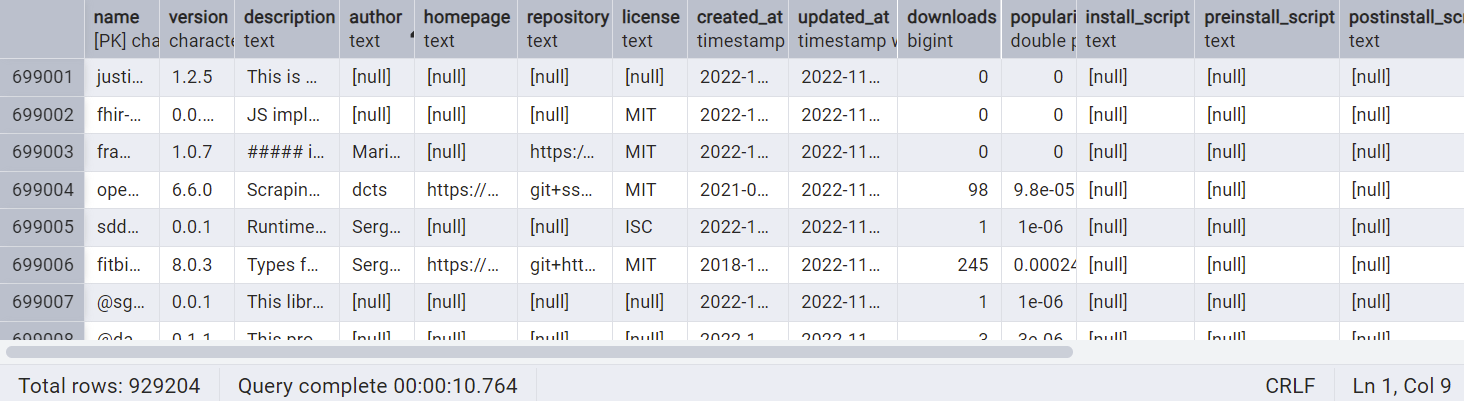

After running my scraper for ~120 hours I had a database with 900,000 records.

In the next post we will start analysing some of the data we have found. In the meantime, the scraper will continue to run.

Below is a basic scraper implemented in Go that will scrape NPM into a PostgreSQL database. It is resumable and should be fairly easy to get running.

Thank you for reading, stay tuned for the next chapter.

go.mod:

module npm-scraper

go 1.23.4

require (

github.com/jackc/chunkreader/v2 v2.0.1 // indirect

github.com/jackc/pgconn v1.14.3 // indirect

github.com/jackc/pgio v1.0.0 // indirect

github.com/jackc/pgpassfile v1.0.0 // indirect

github.com/jackc/pgproto3/v2 v2.3.3 // indirect

github.com/jackc/pgservicefile v0.0.0-20221227161230-091c0ba34f0a // indirect

github.com/jackc/pgtype v1.14.0 // indirect

github.com/jackc/pgx/v4 v4.18.3 // indirect

github.com/jackc/puddle v1.3.0 // indirect

github.com/lib/pq v1.10.9 // indirect

github.com/mattn/go-colorable v0.1.13 // indirect

github.com/mattn/go-isatty v0.0.20 // indirect

github.com/mitchellh/colorstring v0.0.0-20190213212951-d06e56a500db // indirect

github.com/rivo/uniseg v0.4.7 // indirect

github.com/rs/zerolog v1.33.0 // indirect

github.com/schollz/progressbar/v3 v3.18.0 // indirect

golang.org/x/crypto v0.20.0 // indirect

golang.org/x/sys v0.29.0 // indirect

golang.org/x/term v0.28.0 // indirect

golang.org/x/text v0.14.0 // indirect

)

main.go:

package main

import (

"context"

"encoding/json"

"fmt"

"io"

"math"

"net/http"

"os"

"os/signal"

"strings"

"sync"

"syscall"

"time"

"github.com/jackc/pgx/v4/pgxpool"

"github.com/rs/zerolog"

"github.com/rs/zerolog/log"

)

const (

// NPM Registry endpoints

changesAPIURL = "https://replicate.npmjs.com/_changes?include_docs=true&limit=50&since=%s"

packageAPIFormat = "https://registry.npmjs.org/%s"

downloadsAPIURL = "https://api.npmjs.org/downloads/point/last-month/%s"

userAgent = "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:136.0) Gecko/20100101 Firefox/136.0"

// Scraper settings

checkpointFile = "checkpoint.json"

batchSize = 10 // Not referenced below; the page size actually used is the limit=50 hardcoded in changesAPIURL

maxRetries = 3

retryDelay = 5 * time.Second

concurrency = 5

apiRequestDelay = 2 * time.Second // Delay between API requests to avoid rate limits

)

// Database configuration - could be loaded from env vars

var dbConfig = struct {

Host string

Port int

User string

Password string

DBName string

}{

Host: "localhost",

Port: 5432,

User: "ctuser",

Password: "password",

DBName: "npmjs",

}

// Package represents essential NPM package data

type Package struct {

Name string `json:"name"`

Version string `json:"version"`

Description string `json:"description"`

Author string `json:"author"`

Homepage string `json:"homepage"`

Repository string `json:"repository"`

License string `json:"license"`

CreatedAt time.Time `json:"created"`

UpdatedAt time.Time `json:"modified"`

Downloads int64 `json:"downloads"`

InstallScript string `json:"install_script"`

PreinstallScript string `json:"preinstall_script"`

PostinstallScript string `json:"postinstall_script"`

}

// ChangesResponse represents the response from the NPM changes API

type ChangesResponse struct {

Results []ChangeResult `json:"results"`

LastSeq string `json:"last_seq"`

}

// ChangeResult represents a single change in the registry

type ChangeResult struct {

ID string `json:"id"`

Changes []ChangeInfo `json:"changes"`

Doc *PackageDoc `json:"doc"`

}

// ChangeInfo contains information about a change

type ChangeInfo struct {

Rev string `json:"rev"`

}

// PackageDoc represents a package document from the registry

type PackageDoc struct {

ID string `json:"_id"`

Rev string `json:"_rev"`

Name string `json:"name"`

Description string `json:"description"`

DistTags map[string]string `json:"dist-tags"`

Versions map[string]interface{} `json:"versions"`

Readme string `json:"readme"`

Maintainers []Maintainer `json:"maintainers"`

Time map[string]string `json:"time"` // Maps "created", "modified" and each version to a timestamp

Author interface{} `json:"author"`

Repository interface{} `json:"repository"`

Homepage string `json:"homepage"`

License interface{} `json:"license"`

Bugs interface{} `json:"bugs"`

}

// Maintainer represents a package maintainer

type Maintainer struct {

Name string `json:"name"`

Email string `json:"email"`

}

// DownloadInfo represents the download count response

type DownloadInfo struct {

Downloads int64 `json:"downloads"`

Start string `json:"start"`

End string `json:"end"`

Package string `json:"package"`

}

// Checkpoint tracks progress to allow resuming

type Checkpoint struct {

LastSequence string `json:"last_sequence"`

ProcessedPackages int `json:"processed_packages"`

FailedPackages map[string]string `json:"failed_packages"`

UpdatedAt time.Time `json:"updated_at"`

}

// Scraper manages the NPM scraping process

type Scraper struct {

db *pgxpool.Pool

client *http.Client

checkpoint Checkpoint

packageQueue chan string

wg sync.WaitGroup

ctx context.Context

cancel context.CancelFunc

checkpointLock sync.Mutex

}

func main() {

// Configure logging

zerolog.TimeFieldFormat = zerolog.TimeFormatUnix

log.Logger = log.Output(zerolog.ConsoleWriter{Out: os.Stdout})

// Create context that can be canceled

ctx, cancel := context.WithCancel(context.Background())

defer cancel()

// Initialize the scraper

scraper, err := NewScraper(ctx, cancel)

if err != nil {

log.Fatal().Err(err).Msg("Failed to initialize scraper")

}

defer scraper.Close()

// Handle graceful shutdown

signalChan := make(chan os.Signal, 1)

signal.Notify(signalChan, syscall.SIGINT, syscall.SIGTERM)

go func() {

<-signalChan

log.Info().Msg("Shutdown signal received, finishing in-progress tasks...")

cancel()

}()

// Run the scraper

if err := scraper.Run(); err != nil {

log.Fatal().Err(err).Msg("Failed during scraping")

}

}

// NewScraper initializes a new NPM Scraper

func NewScraper(ctx context.Context, cancel context.CancelFunc) (*Scraper, error) {

// Setup HTTP client with reasonable timeouts and a custom User-Agent

transport := &http.Transport{

MaxIdleConns: 100,

MaxIdleConnsPerHost: 100,

IdleConnTimeout: 90 * time.Second,

}

client := &http.Client{

Timeout: 30 * time.Second,

Transport: &userAgentTransport{

transport: transport,

userAgent: userAgent,

},

}

// Connect to the database

connStr := fmt.Sprintf("postgres://%s:%s@%s:%d/%s?sslmode=disable",

dbConfig.User, dbConfig.Password, dbConfig.Host, dbConfig.Port, dbConfig.DBName)

dbpool, err := pgxpool.Connect(ctx, connStr)

if err != nil {

return nil, fmt.Errorf("unable to connect to database: %w", err)

}

// Initialize the scraper

s := &Scraper{

db: dbpool,

client: client,

packageQueue: make(chan string, concurrency*2),

ctx: ctx,

cancel: cancel,

checkpointLock: sync.Mutex{},

}

// Initialize database tables

if err := s.initializeDatabase(); err != nil {

return nil, fmt.Errorf("failed to initialize database: %w", err)

}

// Load the checkpoint or create a new one

if err := s.loadCheckpoint(); err != nil {

return nil, fmt.Errorf("failed to load checkpoint: %w", err)

}

return s, nil

}

// userAgentTransport wraps a RoundTripper and sets the User-Agent header on every request

type userAgentTransport struct {

transport http.RoundTripper

userAgent string

}

func (t *userAgentTransport) RoundTrip(req *http.Request) (*http.Response, error) {

// Clone first: a RoundTripper must not modify the caller's request

req = req.Clone(req.Context())

req.Header.Set("User-Agent", t.userAgent)

return t.transport.RoundTrip(req)

}

// Close closes the database connection

func (s *Scraper) Close() {

s.db.Close()

}

// Run executes the scraping process

func (s *Scraper) Run() error {

log.Info().Msg("Starting NPM registry scraper")

// Start workers for processing packages

for i := 0; i < concurrency; i++ {

s.wg.Add(1)

go s.packageWorker(i)

}

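// If we're continuing from a previous run with failed packages, copy their

// names first: the workers modify FailedPackages concurrently, so ranging

// over the map directly while queueing would be a data race

s.checkpointLock.Lock()

retryPackages := make([]string, 0, len(s.checkpoint.FailedPackages))

for pkg := range s.checkpoint.FailedPackages {

retryPackages = append(retryPackages, pkg)

}

s.checkpointLock.Unlock()

if len(retryPackages) > 0 {

log.Info().Int("count", len(retryPackages)).Msg("Retrying failed packages")

for _, pkg := range retryPackages {

select {

case <-s.ctx.Done():

goto Cleanup // Use goto to jump to cleanup

case s.packageQueue <- pkg:

// Package queued for retry

}

}

}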

// Continuously try to fetch packages, never giving up

for {

select {

case <-s.ctx.Done():

goto Cleanup

default:

err := s.fetchPackagesFromChanges()

if err != nil {

log.Error().Err(err).Msg("Error fetching packages, will retry after delay")

// Wait before retrying to avoid hammering the API

time.Sleep(30 * time.Second)

continue

}

// If fetchPackagesFromChanges returns without error, it means we're done

// In the new implementation, this shouldn't happen as it now runs forever

log.Info().Msg("Finished fetching all packages")

goto Cleanup

}

}

Cleanup:

// Wait for all workers to complete

close(s.packageQueue)

s.wg.Wait()

log.Info().Int("processed", s.checkpoint.ProcessedPackages).Msg("NPM registry scraping completed")

return nil

}

// initializeDatabase creates necessary tables if they don't exist

func (s *Scraper) initializeDatabase() error {

_, err := s.db.Exec(s.ctx, `

CREATE TABLE IF NOT EXISTS npm_packages (

name VARCHAR(255) PRIMARY KEY,

version VARCHAR(100),

description TEXT DEFAULT NULL,

author TEXT DEFAULT NULL,

homepage TEXT DEFAULT NULL,

repository TEXT DEFAULT NULL,

license TEXT DEFAULT NULL,

created_at TIMESTAMP,

updated_at TIMESTAMP,

downloads BIGINT,

popularity_score FLOAT,

install_script TEXT DEFAULT NULL,

preinstall_script TEXT DEFAULT NULL,

postinstall_script TEXT DEFAULT NULL,

last_updated TIMESTAMP DEFAULT NOW()

);

CREATE INDEX IF NOT EXISTS npm_packages_downloads_idx ON npm_packages(downloads DESC);

CREATE INDEX IF NOT EXISTS npm_packages_popularity_idx ON npm_packages(popularity_score DESC);

CREATE INDEX IF NOT EXISTS npm_packages_has_install_script_idx ON npm_packages((install_script IS NOT NULL));

CREATE INDEX IF NOT EXISTS npm_packages_has_preinstall_script_idx ON npm_packages((preinstall_script IS NOT NULL));

CREATE INDEX IF NOT EXISTS npm_packages_has_postinstall_script_idx ON npm_packages((postinstall_script IS NOT NULL));

`)

return err

}

// loadCheckpoint loads the previous checkpoint or creates a new one

func (s *Scraper) loadCheckpoint() error {

file, err := os.Open(checkpointFile)

if os.IsNotExist(err) {

s.checkpoint = Checkpoint{

LastSequence: "0", // Start from the beginning

FailedPackages: make(map[string]string),

UpdatedAt: time.Now(),

}

return nil

} else if err != nil {

return err

}

defer file.Close()

decoder := json.NewDecoder(file)

if err := decoder.Decode(&s.checkpoint); err != nil {

return err

}

if s.checkpoint.FailedPackages == nil {

s.checkpoint.FailedPackages = make(map[string]string)

}

log.Info().Str("lastSeq", s.checkpoint.LastSequence).Int("processed", s.checkpoint.ProcessedPackages).Msg("Loaded checkpoint")

return nil

}

// saveCheckpoint saves the current progress to disk

func (s *Scraper) saveCheckpoint() error {

s.checkpointLock.Lock()

defer s.checkpointLock.Unlock()

s.checkpoint.UpdatedAt = time.Now()

file, err := os.Create(checkpointFile)

if err != nil {

return err

}

defer file.Close()

encoder := json.NewEncoder(file)

encoder.SetIndent("", " ")

return encoder.Encode(s.checkpoint)

}

// fetchPackagesFromChanges uses the changes feed to discover NPM packages

func (s *Scraper) fetchPackagesFromChanges() error {

log.Info().Msg("Fetching NPM packages using changes feed")

seqID := s.checkpoint.LastSequence

if seqID == "" {

seqID = "0" // Start from the beginning

}

totalQueued := 0

consecutiveErrorCount := 0

for {

// Check if context is done

select {

case <-s.ctx.Done():

log.Info().Msg("Package fetching interrupted by context cancellation")

return nil

default:

}

changesURL := fmt.Sprintf(changesAPIURL, seqID)

log.Info().Str("since", seqID).Msg("Fetching changes batch")

// Respect rate limits with adaptive delay based on error count

delay := apiRequestDelay

if consecutiveErrorCount > 0 {

// Exponential backoff with maximum of 5 minutes between retries

delay = time.Duration(math.Min(300, float64(apiRequestDelay.Seconds()*math.Pow(2, float64(consecutiveErrorCount))))) * time.Second

}

log.Info().Dur("delay", delay).Int("errors", consecutiveErrorCount).Msg("Waiting before next request")

time.Sleep(delay)

// Create the request

req, err := http.NewRequestWithContext(s.ctx, "GET", changesURL, nil)

if err != nil {

log.Error().Err(err).Msg("Failed to create request, will retry")

consecutiveErrorCount++

continue // Just retry

}

// Make the request with retries

var resp *http.Response

var responseBody []byte

// Attempt the request with our own retry logic

success := false

for attempt := 0; attempt < maxRetries; attempt++ {

if attempt > 0 {

retryBackoff := retryDelay * time.Duration(1<<uint(attempt-1)) // Exponential backoff

log.Info().Int("attempt", attempt+1).Dur("backoff", retryBackoff).Msg("Retrying request")

select {

case <-s.ctx.Done():

return nil

case <-time.After(retryBackoff):

// Continue after delay

}

}

// Create a fresh copy of the request for each attempt

reqCopy := req.Clone(s.ctx)

resp, err = s.client.Do(reqCopy)

if err == nil && resp.StatusCode == http.StatusOK {

// Read the response body

responseBody, err = io.ReadAll(resp.Body)

resp.Body.Close()

if err == nil {

success = true

break

}

log.Error().Err(err).Msg("Failed to read response body")

} else {

if resp != nil {

body, _ := io.ReadAll(resp.Body)

log.Error().Err(err).Int("status", resp.StatusCode).Str("body", string(body)).

Msg("Request failed")

resp.Body.Close()

} else if err != nil {

log.Error().Err(err).Msg("Request error")

}

}

}

if !success {

log.Error().Str("url", changesURL).Msg("All request attempts failed, backing off more before retrying")

consecutiveErrorCount++

// Save the checkpoint in case we restart

if err := s.saveCheckpoint(); err != nil {

log.Error().Err(err).Msg("Failed to save checkpoint after errors")

}

continue // Try again with longer backoff

}

// Parse the response

var rawResponse map[string]interface{}

if err := json.Unmarshal(responseBody, &rawResponse); err != nil {

log.Error().Err(err).Msg("Failed to parse changes response, will retry")

consecutiveErrorCount++

continue

}

// Extract results and last_seq

results, ok := rawResponse["results"].([]interface{})

if !ok {

log.Error().Msg("Results field not found or not an array, will retry")

consecutiveErrorCount++

continue

}

// Reset error counter since we got a valid response

consecutiveErrorCount = 0

// Check if we got any results

if len(results) == 0 {

log.Info().Msg("No new changes yet, waiting longer before next check")

// If we're caught up, use a longer wait time (30 seconds)

time.Sleep(30 * time.Second)

continue

}

// Queue packages from this batch

queuedFromBatch := 0

for _, rawChange := range results {

change, ok := rawChange.(map[string]interface{})

if !ok {

continue // Skip invalid changes

}

// Skip design docs and rows without a usable ID

id, ok := change["id"].(string)

if !ok || id == "" || strings.HasPrefix(id, "_") {

continue // Skip design docs or invalid IDs

}

// Skip deleted packages - ONLY if deleted is true

if deleted, ok := change["deleted"].(bool); ok && deleted {

log.Debug().Str("id", id).Msg("Skipping deleted package")

continue // Skip explicitly deleted packages

}

// Check if doc exists

doc, hasDoc := change["doc"].(map[string]interface{})

if !hasDoc || doc == nil {

continue // Skip missing docs

}

// Check for _deleted field in doc - ONLY if _deleted is true

docDeleted, ok := doc["_deleted"].(bool)

if ok && docDeleted == true {

log.Debug().Str("id", id).Msg("Skipping package with _deleted=true")

continue // Skip docs marked as deleted

}

// Queue package for processing

select {

case <-s.ctx.Done():

return nil

case s.packageQueue <- id:

queuedFromBatch++

}

}

totalQueued += queuedFromBatch

log.Info().Int("batch", queuedFromBatch).Int("total_queued", totalQueued).Msg("Queued packages from changes")

// Update the sequence ID for the next batch

lastSeq, ok := rawResponse["last_seq"].(string)

if !ok {

// Handle case where last_seq might be a number or an object

if lastSeqNum, ok := rawResponse["last_seq"].(float64); ok {

lastSeq = fmt.Sprintf("%d", int(lastSeqNum))

} else {

lastSeqObj, ok := rawResponse["last_seq"].(map[string]interface{})

if ok && lastSeqObj["seq"] != nil {

if seqNum, ok := lastSeqObj["seq"].(float64); ok {

lastSeq = fmt.Sprintf("%d", int(seqNum))

} else if seqStr, ok := lastSeqObj["seq"].(string); ok {

lastSeq = seqStr

}

}

}

}

// Only update sequence if we got a valid value

if lastSeq != "" && lastSeq != seqID {

log.Info().Str("old_seq", seqID).Str("new_seq", lastSeq).Msg("Updating sequence ID")

seqID = lastSeq

s.checkpointLock.Lock()

s.checkpoint.LastSequence = seqID

s.checkpointLock.Unlock()

// Save the checkpoint after every batch so a restart resumes from this sequence

if err := s.saveCheckpoint(); err != nil {

log.Error().Err(err).Msg("Failed to save checkpoint during changes fetching")

}

}

}

}

// packageWorker processes packages from the queue

func (s *Scraper) packageWorker(id int) {

defer s.wg.Done()

log.Info().Int("worker", id).Msg("Package worker started")

for pkgName := range s.packageQueue {

select {

case <-s.ctx.Done():

log.Info().Int("worker", id).Msg("Worker shutting down")

return

default:

err := s.processPackage(pkgName)

// Update the shared checkpoint under the lock and take a copy of the counter

s.checkpointLock.Lock()

if err != nil {

s.checkpoint.FailedPackages[pkgName] = err.Error()

} else {

delete(s.checkpoint.FailedPackages, pkgName)

s.checkpoint.ProcessedPackages++

}

processed := s.checkpoint.ProcessedPackages

s.checkpointLock.Unlock()

if err != nil {

log.Error().Err(err).Str("package", pkgName).Int("worker", id).Msg("Failed to process package")

continue

}

// Log progress and save the checkpoint every 100 processed packages

if processed%100 == 0 {

log.Info().Int("processed", processed).Msg("Progress update")

if err := s.saveCheckpoint(); err != nil {

log.Error().Err(err).Int("worker", id).Msg("Failed to save checkpoint")

}

}

}

}

log.Info().Int("worker", id).Msg("Worker finished")

}

// processPackage fetches and stores a single NPM package

func (s *Scraper) processPackage(pkgName string) error {

var pkg Package

var popularityScore float64

var err error

// Retry logic for API calls

for retry := 0; retry < maxRetries; retry++ {

if retry > 0 {

log.Info().Str("package", pkgName).Int("attempt", retry+1).Msg("Retrying package fetch")

time.Sleep(retryDelay * time.Duration(retry)) // Linear backoff: 5s, then 10s

}

// Fetch package details

pkg, popularityScore, err = s.fetchPackage(pkgName)

if err == nil {

break

}

// Don't retry 404 errors - package likely doesn't exist anymore

if strings.Contains(err.Error(), "status 404") {

log.Warn().Str("package", pkgName).Msg("Package not found (404), skipping")

return nil // Return nil to avoid marking as failed

}

log.Error().Err(err).Str("package", pkgName).Int("attempt", retry+1).Msg("Failed to fetch package")

}

if err != nil {

return fmt.Errorf("all attempts to fetch package %s failed: %w", pkgName, err)

}

// Store the package in the database

return s.storePackage(pkg, popularityScore)

}

// fetchPackage retrieves package details from NPM

func (s *Scraper) fetchPackage(pkgName string) (Package, float64, error) {

var pkg Package

var popularityScore float64

// Fetch package metadata

pkgURL := fmt.Sprintf(packageAPIFormat, pkgName)

resp, err := s.client.Get(pkgURL)

if err != nil {

return pkg, 0, fmt.Errorf("failed to fetch package %s: %w", pkgName, err)

}

defer resp.Body.Close()

if resp.StatusCode != http.StatusOK {

return pkg, 0, fmt.Errorf("package API returned status %d for %s", resp.StatusCode, pkgName)

}

// Parse the JSON response

var pkgData map[string]interface{}

if err := json.NewDecoder(resp.Body).Decode(&pkgData); err != nil {

return pkg, 0, fmt.Errorf("failed to decode package data for %s: %w", pkgName, err)

}

// Extract basic package information

pkg.Name = pkgName

// Handle version info

if distTags, ok := pkgData["dist-tags"].(map[string]interface{}); ok {

if latest, ok := distTags["latest"].(string); ok {

pkg.Version = latest

}

}

// Extract description

if desc, ok := pkgData["description"].(string); ok {

pkg.Description = desc

}

// Extract author information

if author, ok := pkgData["author"].(map[string]interface{}); ok {

if name, ok := author["name"].(string); ok {

pkg.Author = name

}

} else if authorStr, ok := pkgData["author"].(string); ok {

pkg.Author = authorStr

}

// Extract homepage

if homepage, ok := pkgData["homepage"].(string); ok {

pkg.Homepage = homepage

}

// Extract repository

if repo, ok := pkgData["repository"].(map[string]interface{}); ok {

if url, ok := repo["url"].(string); ok {

pkg.Repository = url

}

} else if repoStr, ok := pkgData["repository"].(string); ok {

pkg.Repository = repoStr

}

// Extract license

if license, ok := pkgData["license"].(string); ok {

pkg.License = license

} else if licenses, ok := pkgData["licenses"].([]interface{}); ok && len(licenses) > 0 {

if licenseObj, ok := licenses[0].(map[string]interface{}); ok {

if licType, ok := licenseObj["type"].(string); ok {

pkg.License = licType

}

}

}

// Extract time information

if times, ok := pkgData["time"].(map[string]interface{}); ok {

if created, ok := times["created"].(string); ok {

if t, err := time.Parse(time.RFC3339, created); err == nil {

pkg.CreatedAt = t

}

}

if modified, ok := times["modified"].(string); ok {

if t, err := time.Parse(time.RFC3339, modified); err == nil {

pkg.UpdatedAt = t

}

}

}

// Extract installation scripts from the latest version

if versions, ok := pkgData["versions"].(map[string]interface{}); ok && pkg.Version != "" {

if latestVersion, ok := versions[pkg.Version].(map[string]interface{}); ok {

if scripts, ok := latestVersion["scripts"].(map[string]interface{}); ok {

// Get install script

if install, ok := scripts["install"].(string); ok {

pkg.InstallScript = install

log.Debug().Str("package", pkgName).Str("script", "install").Str("content", install).Msg("Found install script")

}

// Get preinstall script

if preinstall, ok := scripts["preinstall"].(string); ok {

pkg.PreinstallScript = preinstall

log.Debug().Str("package", pkgName).Str("script", "preinstall").Str("content", preinstall).Msg("Found preinstall script")

}

// Get postinstall script

if postinstall, ok := scripts["postinstall"].(string); ok {

pkg.PostinstallScript = postinstall

log.Debug().Str("package", pkgName).Str("script", "postinstall").Str("content", postinstall).Msg("Found postinstall script")

}

}

}

}

// Fetch download count

downloads, downloadErr := s.fetchDownloadCount(pkgName)

if downloadErr != nil {

log.Warn().Err(downloadErr).Str("package", pkgName).Msg("Failed to fetch download count, using 0")

pkg.Downloads = 0

} else {

pkg.Downloads = downloads

// Use downloads as a proxy for popularity

// Normalize: most packages have <100k downloads, but some have millions

// This gives a rough popularity score between 0-1

popularityScore = math.Min(1.0, float64(downloads)/1000000.0)

}

return pkg, popularityScore, nil

}

// fetchDownloadCount retrieves the download statistics for a package

func (s *Scraper) fetchDownloadCount(pkgName string) (int64, error) {

url := fmt.Sprintf(downloadsAPIURL, pkgName)

resp, err := s.client.Get(url)

if err != nil {

return 0, fmt.Errorf("failed to fetch download stats: %w", err)

}

defer resp.Body.Close()

// Handle 404s gracefully - package likely doesn't exist or has no stats

if resp.StatusCode == http.StatusNotFound {

return 0, nil // Return 0 downloads for 404s

}

if resp.StatusCode != http.StatusOK {

body, _ := io.ReadAll(resp.Body)

return 0, fmt.Errorf("downloads API returned status %d: %s", resp.StatusCode, string(body))

}

var downloadInfo DownloadInfo

if err := json.NewDecoder(resp.Body).Decode(&downloadInfo); err != nil {

return 0, fmt.Errorf("failed to decode download stats: %w", err)

}

return downloadInfo.Downloads, nil

}

// storePackage saves package information to the database

func (s *Scraper) storePackage(pkg Package, popularityScore float64) error {

// Convert empty strings to nil for SQL NULL values

var description, homepage, repository, license, author interface{}

var installScript, preinstallScript, postinstallScript interface{}

// Handle all text fields - convert empty strings to nil for SQL NULL

// Also check for "[null]" string which might be mistakenly stored

if pkg.Description == "" || pkg.Description == "[null]" {

description = nil

} else {

description = pkg.Description

}

if pkg.Homepage == "" || pkg.Homepage == "[null]" {

homepage = nil

} else {

homepage = pkg.Homepage

}

if pkg.Repository == "" || pkg.Repository == "[null]" {

repository = nil

} else {

repository = pkg.Repository

}

if pkg.License == "" || pkg.License == "[null]" {

license = nil

} else {

license = pkg.License

}

if pkg.Author == "" || pkg.Author == "[null]" {

author = nil

} else {

author = pkg.Author

}

// Handle script fields

if pkg.InstallScript == "" || pkg.InstallScript == "[null]" {

installScript = nil

} else {

installScript = pkg.InstallScript

}

if pkg.PreinstallScript == "" || pkg.PreinstallScript == "[null]" {

preinstallScript = nil

} else {

preinstallScript = pkg.PreinstallScript

}

if pkg.PostinstallScript == "" || pkg.PostinstallScript == "[null]" {

postinstallScript = nil

} else {

postinstallScript = pkg.PostinstallScript

}

// Debug log to check what we're actually storing

log.Debug().

Str("package", pkg.Name).

Interface("homepage", homepage).

Msg("Storing package with homepage value")

_, err := s.db.Exec(s.ctx, `

INSERT INTO npm_packages

(name, version, description, author, homepage, repository, license, created_at, updated_at, downloads, popularity_score, install_script, preinstall_script, postinstall_script, last_updated)

VALUES ($1, $2, $3, $4, $5, $6, $7, $8, $9, $10, $11, $12, $13, $14, NOW())

ON CONFLICT (name)

DO UPDATE SET

version = $2,

description = $3,

author = $4,

homepage = $5,

repository = $6,

license = $7,

created_at = $8,

updated_at = $9,

downloads = $10,

popularity_score = $11,

install_script = $12,

preinstall_script = $13,

postinstall_script = $14,

last_updated = NOW()

`,

pkg.Name, pkg.Version, description, author, homepage, repository,

license, pkg.CreatedAt, pkg.UpdatedAt, pkg.Downloads, popularityScore,

installScript, preinstallScript, postinstallScript)

if err != nil {

return fmt.Errorf("failed to store package %s in database: %w", pkg.Name, err)

}

return nil

}